Conditional entropy

Measure of relative information in probability theory

From Wikipedia, the free encyclopedia


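In information theory, the conditional entropy quantifies the amount of information needed to describe the outcome of a random variable $Y$ given that the value of another random variable $X$ is known. The entropy of $Y$ conditioned on $X$ is written $H(Y|X)$.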
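As an illustration, the standard definition $H(Y|X) = -\sum_{x,y} p(x,y)\log_2 \frac{p(x,y)}{p(x)}$ can be evaluated directly for a finite joint distribution. The following is a minimal Python sketch, assuming the joint probability mass function is stored as a dictionary mapping `(x, y)` pairs to probabilities and that information is measured in bits (base-2 logarithm). The function name `conditional_entropy` and the example distribution are hypothetical.

```python
import math


def conditional_entropy(joint):
    """Return H(Y|X) in bits for a joint pmf given as {(x, y): p}.

    Hypothetical helper for illustration; assumes the probabilities sum to 1.
    """
    # Marginal p(x) = sum over y of p(x, y)
    p_x = {}
    for (x, _), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p

    # H(Y|X) = -sum_{x,y} p(x,y) * log2(p(x,y) / p(x)),
    # where p(x,y) / p(x) is p(y|x); zero-probability terms contribute 0
    h = 0.0
    for (x, _), p in joint.items():
        if p > 0:
            h -= p * math.log2(p / p_x[x])
    return h


# Hypothetical example: X is a fair coin and Y copies X with probability 3/4
joint = {(0, 0): 0.375, (0, 1): 0.125, (1, 0): 0.125, (1, 1): 0.375}
print(round(conditional_entropy(joint), 4))  # prints 0.8113
```

Replacing `math.log2` with `math.log` would give the result in nats instead of bits. As sanity checks, the function returns 0 when $Y$ is a deterministic function of $X$, and returns $H(Y)$ when the two variables are independent.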

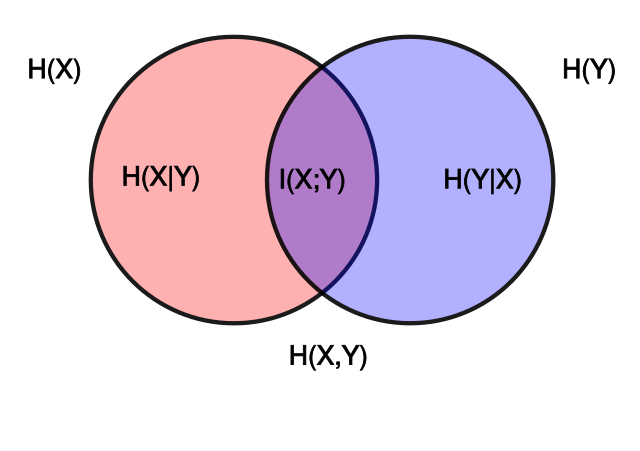

Venn diagram of the information measures associated with correlated variables $X$ and $Y$. The area contained by both circles is the joint entropy $H(X,Y)$. The circle on the left (red and violet) is the individual entropy $H(X)$, with the red being the conditional entropy $H(X|Y)$. The circle on the right (blue and violet) is $H(Y)$, with the blue being $H(Y|X)$. The violet is the mutual information $I(X;Y)$.
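The relationships between the regions of the diagram can be checked numerically. The sketch below assumes a finite joint distribution stored as a dictionary; the helper names `marginals` and `venn_measures` and the example probabilities are hypothetical. It computes each region from its standard definition rather than by subtraction, then confirms that the left circle equals red plus violet, the right circle equals blue plus violet, and the joint entropy equals all three regions together.

```python
import math


def marginals(joint):
    """Return (p_x, p_y) from a joint pmf {(x, y): p} (hypothetical helper)."""
    p_x, p_y = {}, {}
    for (x, y), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p
        p_y[y] = p_y.get(y, 0.0) + p
    return p_x, p_y


def venn_measures(joint):
    """Compute each region of the Venn diagram from its definition, in bits."""
    p_x, p_y = marginals(joint)
    # Keep only nonzero cells so that 0 * log 0 terms are treated as 0
    nz = [((x, y), p) for (x, y), p in joint.items() if p > 0]
    return {
        "H(X,Y)": -sum(p * math.log2(p) for _, p in nz),
        "H(X)": -sum(p * math.log2(p) for p in p_x.values() if p > 0),
        "H(Y)": -sum(p * math.log2(p) for p in p_y.values() if p > 0),
        "H(X|Y)": -sum(p * math.log2(p / p_y[y]) for (x, y), p in nz),
        "H(Y|X)": -sum(p * math.log2(p / p_x[x]) for (x, y), p in nz),
        "I(X;Y)": sum(p * math.log2(p / (p_x[x] * p_y[y])) for (x, y), p in nz),
    }


# Hypothetical joint distribution of two correlated binary variables
joint = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}
m = venn_measures(joint)

# The regions fit together as described in the caption
assert math.isclose(m["H(X)"], m["H(X|Y)"] + m["I(X;Y)"])  # left circle = red + violet
assert math.isclose(m["H(Y)"], m["H(Y|X)"] + m["I(X;Y)"])  # right circle = blue + violet
assert math.isclose(m["H(X,Y)"], m["H(X|Y)"] + m["I(X;Y)"] + m["H(Y|X)"])  # whole area
```

Computing every region independently, instead of deriving $H(X|Y)$ as $H(X,Y) - H(Y)$, keeps the check from being circular.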