Activation function

Artificial neural network node function / From Wikipedia, the free encyclopedia

For the formalism used to approximate the influence of an extracellular electrical field on neurons, see activating function. For a linear system’s transfer function, see transfer function.

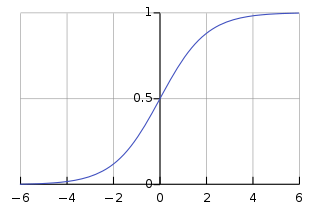

The activation function of a node in an artificial neural network is a function that calculates the output of the node based on its individual inputs and their weights. Nontrivial problems can be solved using only a few nodes if the activation function is nonlinear.[1] Modern activation functions include the logistic (sigmoid) function, used in the 2012 speech recognition model developed by Hinton et al.;[3] the ReLU, used in the 2012 AlexNet computer vision model[4][5] and in the 2015 ResNet model; and the GELU, a smooth version of the ReLU, which was used in the 2018 BERT model.[2]
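As an illustration, the three functions named above can be sketched in Python together with a single node that applies one of them to a weighted sum of its inputs. This is a minimal sketch rather than code from any of the cited models: the function names, the `node_output` helper, and the bias term are assumptions made for the example, and the GELU is written in its exact form x·Φ(x), where Φ is the standard normal cumulative distribution function.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function 1 / (1 + e^(-x)), computed without overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)  # x < 0, so e is in (0, 1)
    return e / (1.0 + e)


def relu(x: float) -> float:
    """Rectified linear unit: max(0, x)."""
    return max(0.0, x)


def gelu(x: float) -> float:
    """Gaussian error linear unit: x * Phi(x), with Phi the standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def node_output(inputs, weights, bias, activation):
    """Hypothetical single node: activation applied to the weighted sum plus bias."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


if __name__ == "__main__":
    for name, f in (("sigmoid", sigmoid), ("relu", relu), ("gelu", gelu)):
        print(name, [round(f(v), 4) for v in (-2.0, 0.0, 2.0)])
    print(node_output([1.0, 2.0], [0.5, -0.25], 0.1, sigmoid))
```

For negative inputs the ReLU returns exactly zero, whereas the GELU returns small negative values and approaches zero smoothly, which is the sense in which it is a smooth version of the ReLU.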